Tenable has warned that romance scams are becoming more organised and scalable as criminal groups adopt AI tools for scripted messaging and fake video calls.

Satnam Narang, Senior Staff Research Engineer at Tenable, said the shift marks a more industrialised form of fraud that blends emotional manipulation with investment crime. He linked it to a wider ecosystem of scam operations that run as structured businesses and use a range of AI models.

Regulators have already signalled the scale of the damage. The US Federal Trade Commission reported US$5.7 billion in losses from investment scams in 2024. Narang said investment fraud is often the end point for romance scams, where an online relationship becomes a pathway to financial deception.

Scaled persuasion

Tenable's assessment focuses on how AI reduces the effort needed to maintain multiple conversations. Large language models can generate fluent text and maintain a consistent narrative voice. That removes some warning signs that have historically helped users spot scams, such as poor grammar, repetitive scripts, or contradictory backstories.

Narang said the technology lets scammers manage more targets at once and sustain longer grooming periods. He said it reflects a broader cybercrime trend in which automation increases throughput and reduces reliance on individual skill.

"2026 marks our entry into a dark age of romance scams," said Narang. "The availability of powerful frontier AI models has provided digital gold for scammers. For the price of a cup of coffee, predators can now leverage these tools to generate linguistically perfect, emotionally resonant messages designed to ensnare victims across the globe."

Deepfake verification

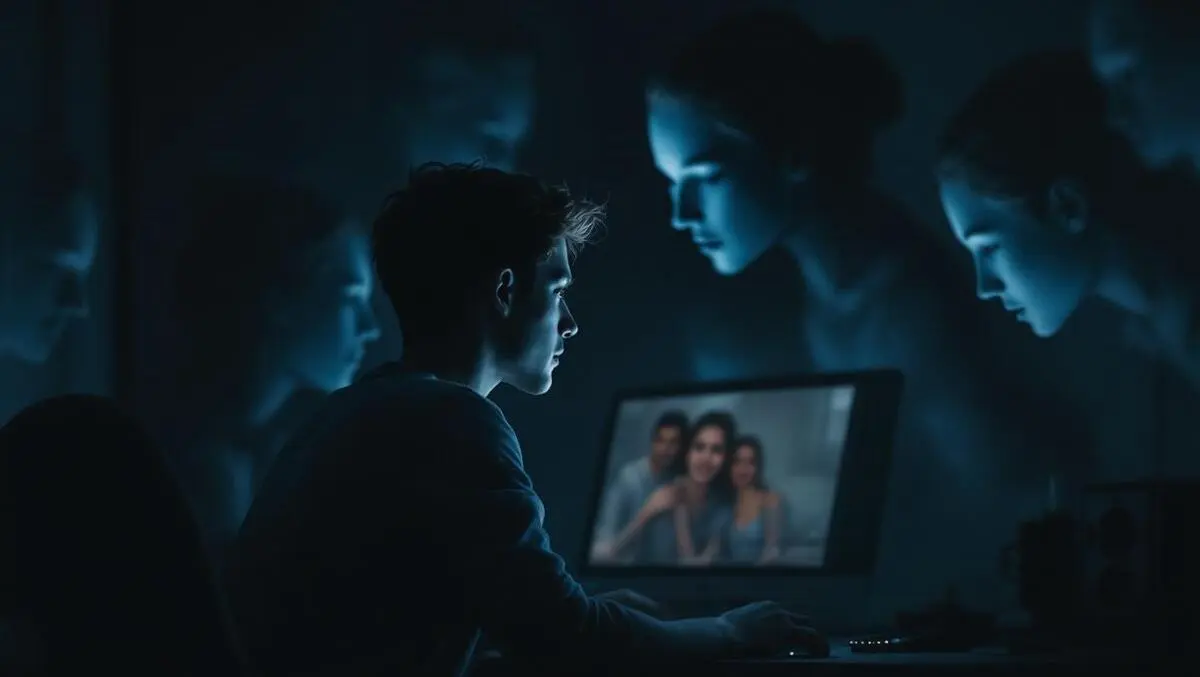

A second concern is the use of deepfake video in what Narang described as dedicated "AI Rooms". These setups are designed to defeat a common safety check recommended by many platforms and safety guides: verifying identity via video call.

Some scam operations now use face-swapping tools during live calls, Narang said, creating a convincing visual match to a stolen or fabricated persona. Tenable said virtual camera software can feed an altered video stream into mainstream calling apps.

That increases the risk that victims will treat video as proof of authenticity. It also complicates platform defences, which may need signals beyond account verification and content moderation.

"Authorities have known about these scam compounds for years. However, a recent firsthand account published by Wired exposes the scale of their evolution. The report details a meticulous AI strategy, including a dedicated 'AI Room' where deepfake technology facilitates face-swapped video calls to 'prove' the scammer's identity," said Narang.

Investment endgame

Tenable also highlighted the role of investment fraud, often described as "pig butchering", in which criminals spend weeks or months building trust before presenting an opportunity. Victims may be shown screenshots of apparent profits or directed to fake trading platforms that simulate gains.

In this pattern, the romance element serves as a credibility bridge. The pitch often arrives in casual conversation and may be framed as a personal tip rather than a hard sell. Narang said many groups now treat the relationship as the first stage of a structured financial scam.

"These scams are the engine of a multi-billion-dollar industry, often built on the backs of trafficking victims. Within specialised compounds, these individuals work high-pressure 'sales floors', where quotas play a major role and bells and gongs ring out to celebrate the theft of a victim's life savings. While romance scams and investment fraud might be tracked separately, they are intimately connected; the romance is simply the hook for fraudulent investments. In 2024, the FBI and FTC reported that investment scams accounted for $5.7 billion in losses, the highest of any category. Yet even this figure is likely a conservative estimate, as the stigma of being conned keeps many victims in the shadows," said Narang.

Open-source access

Narang also pointed to the spread of open-source AI models. He said models that can be run outside commercial services reduce friction for scammers. Commercial AI products often impose usage policies and technical controls that limit certain types of content, while locally run models do not face the same constraints.

He said this expands the low-cost toolkit available to criminal groups and reduces the risk of losing access if an account is flagged or suspended. Tenable cited DeepSeek and Qwen as examples of open-source models used in this context.

Security practitioners have raised similar concerns in other types of crime, including phishing and business email compromise. Romance scams add a heavy social element: targets are often isolated, emotionally invested, and reluctant to report losses.

Narang said increasingly realistic AI-generated audio, images, and video will further erode trust online. "As the LLMs continue to improve their audio, video and image generation, these deceptions are going to become nearly indistinguishable from reality," he said.

Safety advice

Tenable's guidance focuses on financial cues rather than conversational tone. Narang advised users to treat any investment discussion introduced by an online match as a warning sign, regardless of how credible the person seems or what "proof" of returns they provide.

"While the technology is shiny and new, this is a scenario where the old and cliche advice remains one of the key ways to thwart these types of attacks. If it sounds too good to be true, such as investment opportunities that can lead to earning thousands to hundreds of thousands of dollars, it's probably a scam. Don't be swayed by screenshots of earnings or claims of insider expertise. If a match brings up investments, whether aggressively or 'coyly', it is a scam. Cut contact, unmatch, and report," said Narang.

For platforms, the shift towards AI-generated persuasion and deepfake video calls that defeat identity checks signals a more complex threat model, with scammers likely to combine automated messaging, synthetic media, and specialised operational structures in the months ahead.